Leilani H. Gilpin

Assistant Professor

UC Santa Cruz

I am an Assistant Professor in Computer Science and Engineering and an affiliate of the Science & Justice Research Center at UC Santa Cruz. I am part of the AI group @ UCSC and I lead the AI Explainability and Accountability (AIEA) Lab.

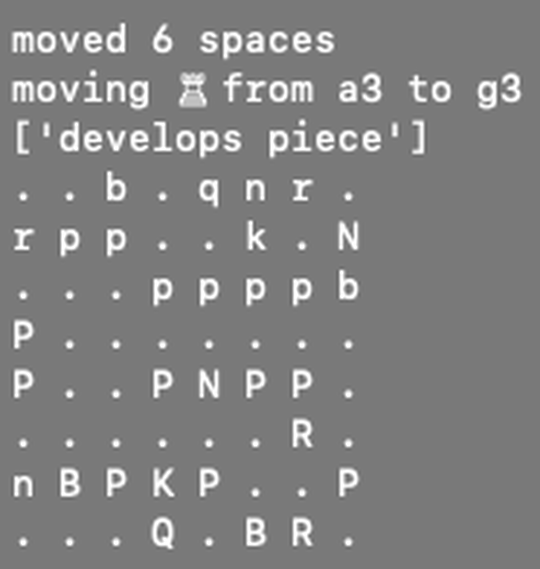

Previously, I was a research scientist at Sony AI working on explainability in AI agents. I received my PhD in Electrical Engineering and Computer Science from MIT, where I worked in CSAIL and continue as a collaborating researcher. During my PhD, I developed “Anomaly Detection through Explanations” (ADE), a self-explaining, full-system monitoring architecture that detects and explains inconsistencies in autonomous vehicles. ADE allows machines and other complex mechanisms to interpret their actions and learn from their mistakes.

My research focuses on theories and methodologies for monitoring, designing, and augmenting complex machines that can explain themselves for diagnosis, accountability, and liability. My long-term research vision is for self-explaining, intelligent machines by design.

Interests

- Explainable AI (XAI)

- NeuroSymbolic AI

- Anomaly Detection

- Commonsense Reasoning

- Anticipatory Thinking for Autonomy

- Semantic Representations of Language

- AI & Ethics

Education

- PhD in Electrical Engineering and Computer Science, 2020

  Massachusetts Institute of Technology

- M.S. in Computational and Mathematical Engineering, 2013

  Stanford University

- BSc in Computer Science, BSc in Mathematics, Music minor, 2011

  UC San Diego