Vahid Ganjalizadeh

A Ph.D. student at UCSC in Electrical and Computer Engineering. Enjoys working with photonics and writing code to do things!

I'm a final-year ECE Ph.D. candidate at UCSC. I did my bachelor's and master's at the University of Tabriz in my beautiful hometown, Tabriz. I joined the Applied Optics group in the Jack Baskin School of Engineering (JBSOE) under the supervision of Prof. Holger Schmidt.

I have been lucky to study at UCSC, with its spectacular nature. When I'm not doing research, I enjoy being outdoors and taking photos. Check out my small gallery section below.

There is a certain irony in working with lasers and light in the dark to find new things!

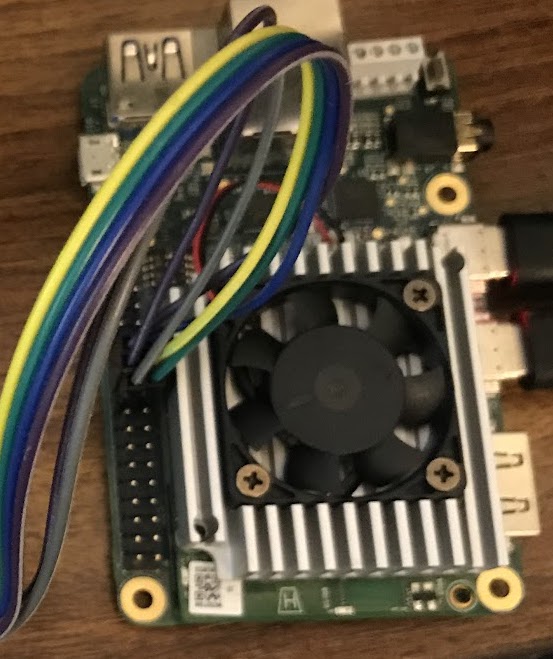

In this project, I developed a deep-learning model to improve the accuracy of fluorescence event detection. I use the TensorFlow library in Python to design, compile, and train a neural-network model for event classification. The trained model is then transferred to Edge TPU accelerator hardware (Google Coral Dev Board) for real-time inference. I built the quantization-aware model from scratch, taking into account the constraints required for compatibility with the Coral Dev Board. I also developed a pipeline that automates dataset preparation for the training step. A Plotly Dash dashboard was implemented for real-time monitoring of the results. To achieve real-time performance, I programmed an FPGA to bin photon events and transfer them via Ethernet to the host machine.
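The Edge TPU only runs models that are fully 8-bit integer quantized, which is why quantization-aware training matters here. As a rough illustration of what such training simulates, the NumPy sketch below implements the common affine "fake quantization" step (quantize to int8, then immediately dequantize, with real ≈ scale × (q − zero_point)) so the network can learn under int8 rounding. The function names are hypothetical and this is not the project's TensorFlow code; in practice the TensorFlow Model Optimization toolkit inserts equivalent operations for you.

```python
import numpy as np


def affine_qparams(x_min, x_max, num_bits=8):
    """Compute (scale, zero_point) mapping [x_min, x_max] onto signed num_bits integers."""
    qmin, qmax = -(2 ** (num_bits - 1)), 2 ** (num_bits - 1) - 1
    # Widen the range to include 0 so that zero is exactly representable.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    scale = (x_max - x_min) / (qmax - qmin)
    if scale == 0.0:  # degenerate all-zero range
        scale = 1.0
    zero_point = int(np.clip(round(qmin - x_min / scale), qmin, qmax))
    return scale, zero_point


def fake_quantize(x, scale, zero_point, num_bits=8):
    """Round x onto the integer grid, clip to the int range, and map back to float."""
    qmin, qmax = -(2 ** (num_bits - 1)), 2 ** (num_bits - 1) - 1
    q = np.clip(np.round(x / scale) + zero_point, qmin, qmax)
    return (q - zero_point) * scale


if __name__ == "__main__":
    weights = np.random.default_rng(0).normal(0.0, 0.5, size=1000)
    scale, zp = affine_qparams(weights.min(), weights.max())
    w_q = fake_quantize(weights, scale, zp)
    print(f"scale={scale:.5f}, zero_point={zp}")
    print(f"distinct levels: {np.unique(w_q).size}, max error: {np.abs(w_q - weights).max():.5f}")
```

Because the rounding error is bounded by half a quantization step, a model trained with these ops in the forward pass tends to lose less accuracy after conversion than one quantized only after training.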

I've been developing machine-learning models for image processing in a couple of projects. I use convolutional neural networks (CNNs) to extract coarse and fine features from input images and map them into another space for regression and/or classification. These are ongoing projects, and I will share more details in the near future.
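To make "coarse and fine features" a bit more concrete: a convolutional layer slides small filters over the image, and pooling downsamples the resulting feature maps so that later layers see progressively coarser structure. The NumPy sketch below shows one convolution, ReLU, and max-pooling stage using a hand-picked edge filter; a real CNN learns its filters during training, and the helper names here are hypothetical rather than taken from these projects.

```python
import numpy as np


def conv2d_valid(image, kernel):
    """2D cross-correlation (what deep-learning 'convolution' layers compute), no padding."""
    kh, kw = kernel.shape
    h, w = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    out = np.empty((h, w))
    for i in range(h):
        for j in range(w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out


def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling; rows/cols that don't fill a whole window are dropped."""
    h, w = fmap.shape[0] // size, fmap.shape[1] // size
    trimmed = fmap[:h * size, :w * size]
    return trimmed.reshape(h, size, w, size).max(axis=(1, 3))


# Toy 8x8 image: dark left half, bright right half (a single vertical edge).
image = np.zeros((8, 8))
image[:, 4:] = 1.0

# Hand-picked vertical-edge filter; a trained CNN would learn filters like this.
edge_kernel = np.array([[-1.0, 0.0, 1.0]] * 3)

fine = np.maximum(conv2d_valid(image, edge_kernel), 0.0)  # ReLU -> 6x6 fine-scale map
coarse = max_pool2d(fine, size=2)                          # 3x3 coarser summary
print(fine.shape, coarse.shape)
print(coarse)
```

Stacking several such stages is what lets deeper layers respond to larger, more abstract patterns before a final dense layer performs the regression or classification.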

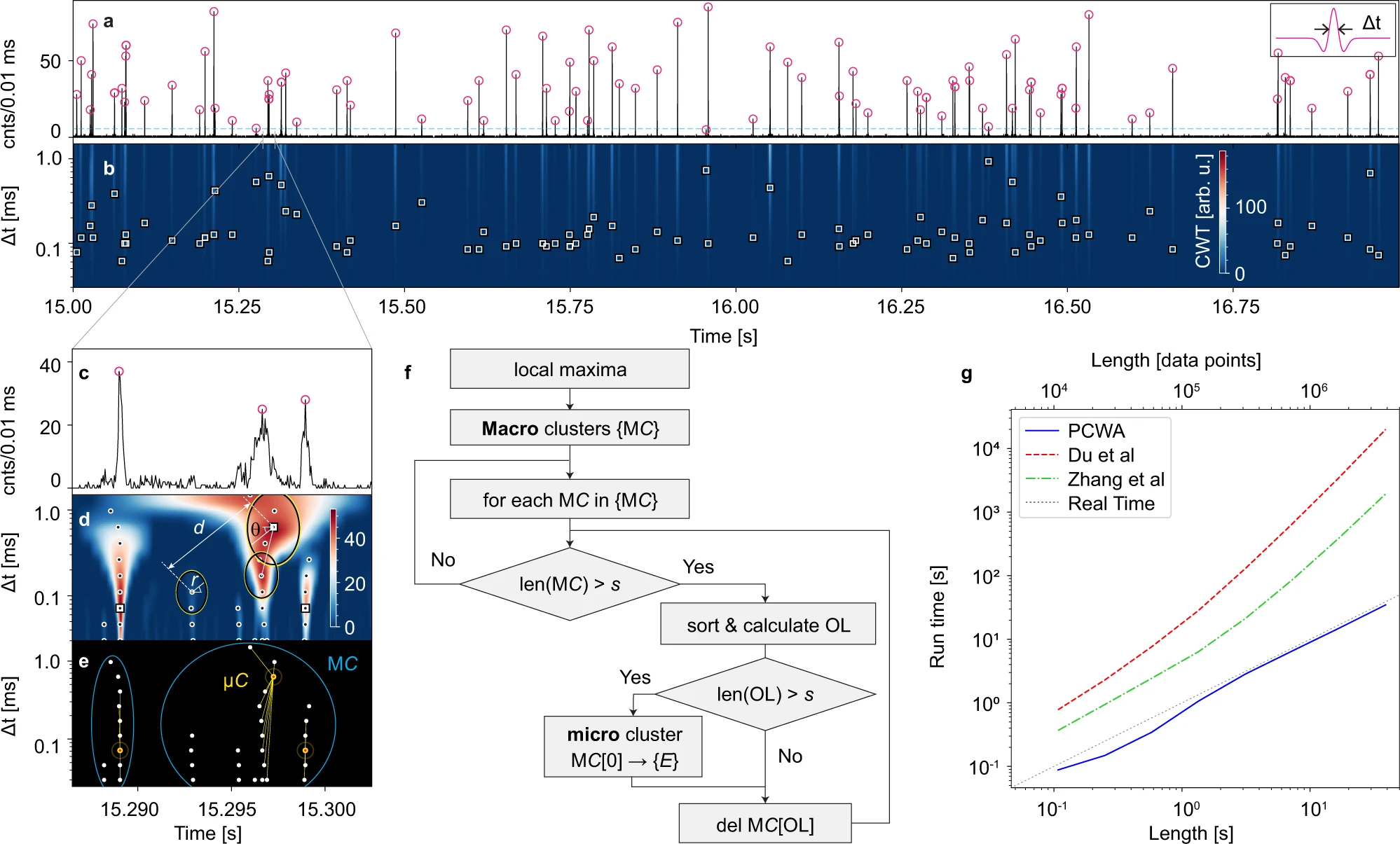

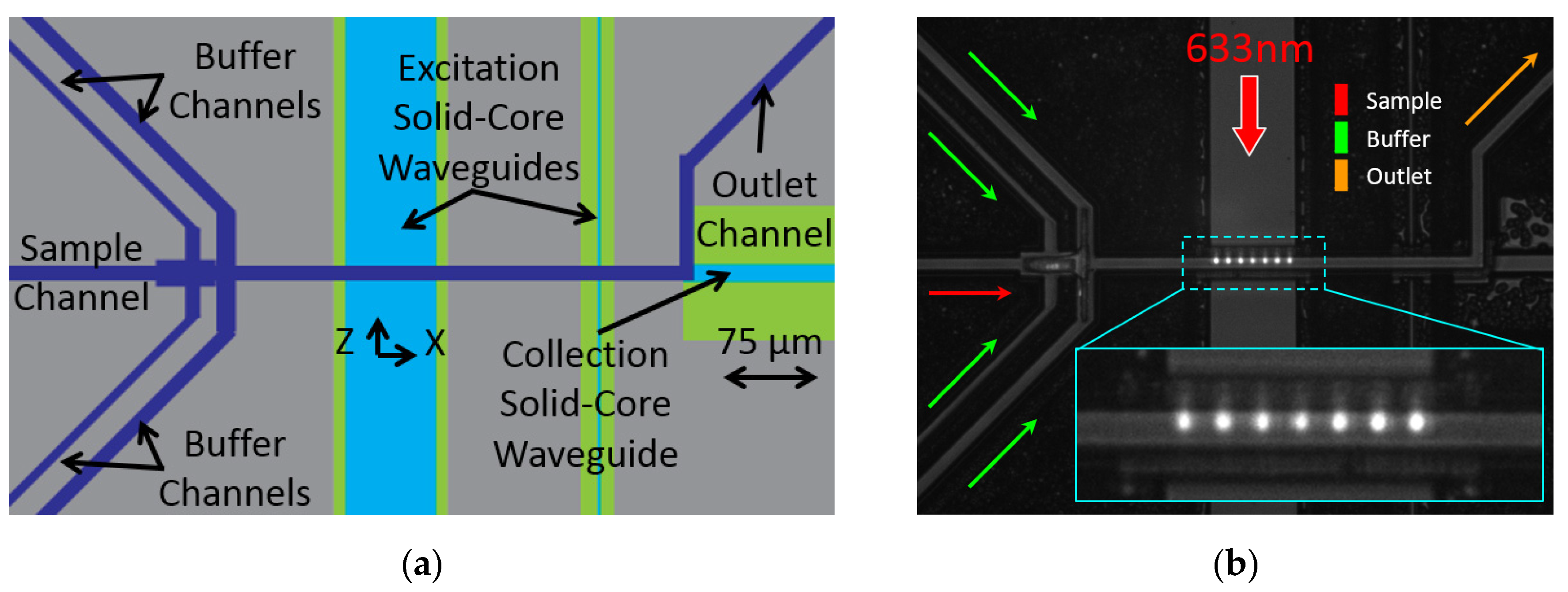

Optofluidic chips are developed to detect individual targets flowing within a fluidic medium. The integration of photonics and microfluidics makes optofluidics well suited for single-molecule detection. In this research, I developed a novel event detection algorithm based on the continuous wavelet transform (CWT), a powerful multiscale data analysis tool. A custom-designed mother wavelet function is applied to the raw binned input signal from a single-photon counting module (SPCM) to improve detection rate and accuracy. The algorithm is highly parallel and runs much faster than previous CWT peak-finder algorithms, fast enough for real-time inline event detection and monitoring [ref].
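The general CWT idea can be sketched as follows. This is not the lab's algorithm: the custom mother wavelet is not given here, so a standard Ricker ("Mexican hat") wavelet stands in for it, and the function names, scales, threshold, and synthetic trace are illustrative assumptions. The binned photon-count trace is correlated with the wavelet at several widths, the strongest response over all scales is kept at each time bin, and local maxima above a threshold are reported as events.

```python
import numpy as np


def ricker(points, a):
    """Ricker (Mexican hat) wavelet of width `a`, sampled on `points` samples, unit energy."""
    t = np.arange(points) - (points - 1) / 2.0
    norm = 2.0 / (np.sqrt(3.0 * a) * np.pi ** 0.25)
    return norm * (1.0 - (t / a) ** 2) * np.exp(-0.5 * (t / a) ** 2)


def cwt(signal, scales):
    """Wavelet coefficient map of shape (len(scales), len(signal))."""
    coeffs = np.empty((len(scales), len(signal)))
    max_len = len(signal) if len(signal) % 2 else len(signal) - 1
    for k, a in enumerate(scales):
        # Truncate at about +/-5 widths; odd length keeps the wavelet centered and
        # capping at the signal length keeps mode="same" output the same size.
        n = min(10 * int(a) + 1, max_len)
        coeffs[k] = np.convolve(signal, ricker(n, a), mode="same")
    return coeffs


def detect_events(signal, scales, threshold):
    """Return (indices, best_scales) of local maxima of the scale-maximum CWT response."""
    coeffs = cwt(np.asarray(signal, dtype=float), scales)
    best = coeffs.max(axis=0)  # strongest response over all scales at each bin
    best_scale = np.asarray(scales)[coeffs.argmax(axis=0)]
    mid = best[1:-1]
    is_peak = (mid > best[:-2]) & (mid >= best[2:]) & (mid > threshold)
    idx = np.nonzero(is_peak)[0] + 1
    return idx, best_scale[idx]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    t = np.arange(2000)
    # Synthetic binned trace: constant background plus two Gaussian bursts.
    rate = 2.0 + 20 * np.exp(-0.5 * ((t - 500) / 8) ** 2) + 15 * np.exp(-0.5 * ((t - 1400) / 15) ** 2)
    counts = rng.poisson(rate)
    idx, widths = detect_events(counts, scales=[4, 8, 16, 32], threshold=25.0)
    print("events at bins", idx.tolist(), "best scales", widths.tolist())
```

Because the Ricker wavelet has zero mean, a constant background contributes almost nothing to the coefficients, and the scale with the largest response gives a rough estimate of each event's duration. Each scale is also independent of the others, which is one reason CWT-based detection parallelizes well.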

The PCWA algorithm has been used in various event detection applications in our lab. I have implemented it in a more complex platform where real-time event detection and concentration calculation are necessary. A GUI (built with DearPyGUI) reads inputs from the user and visualizes real-time insight into the running experiment.
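A concentration estimate can be derived from the event count with a simple counting argument, assuming each detected event is one target, the volumetric flow rate Q is known, and a known fraction of all targets (a detection efficiency) is actually detected. The N events counted over a time t came from a sample volume V = Q·t, so c = N / (efficiency · N_A · Q · t). The function and parameter names below are hypothetical and not taken from the lab's software.

```python
AVOGADRO = 6.02214076e23  # 1/mol (exact SI value)


def molar_concentration(event_count, duration_s, flow_rate_ul_per_min, detection_efficiency=1.0):
    """Estimate the molar concentration (mol/L) of targets from an event count.

    Assumes each event is one target and that `detection_efficiency` of all
    targets flowing through the channel are detected.
    """
    if duration_s <= 0 or flow_rate_ul_per_min <= 0:
        raise ValueError("duration and flow rate must be positive")
    if not 0 < detection_efficiency <= 1:
        raise ValueError("detection_efficiency must be in (0, 1]")
    volume_l = flow_rate_ul_per_min * 1e-6 * duration_s / 60.0  # uL/min -> L over the window
    molecules = event_count / detection_efficiency
    return molecules / (AVOGADRO * volume_l)


if __name__ == "__main__":
    # Illustrative numbers: 600 events in a 60 s window at 0.1 uL/min.
    c = molar_concentration(600, 60.0, 0.1)
    print(f"{c:.3e} M ({c * 1e15:.2f} fM)")
```

In a live GUI, the same calculation can be rerun over a sliding time window so the displayed concentration updates as events arrive.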

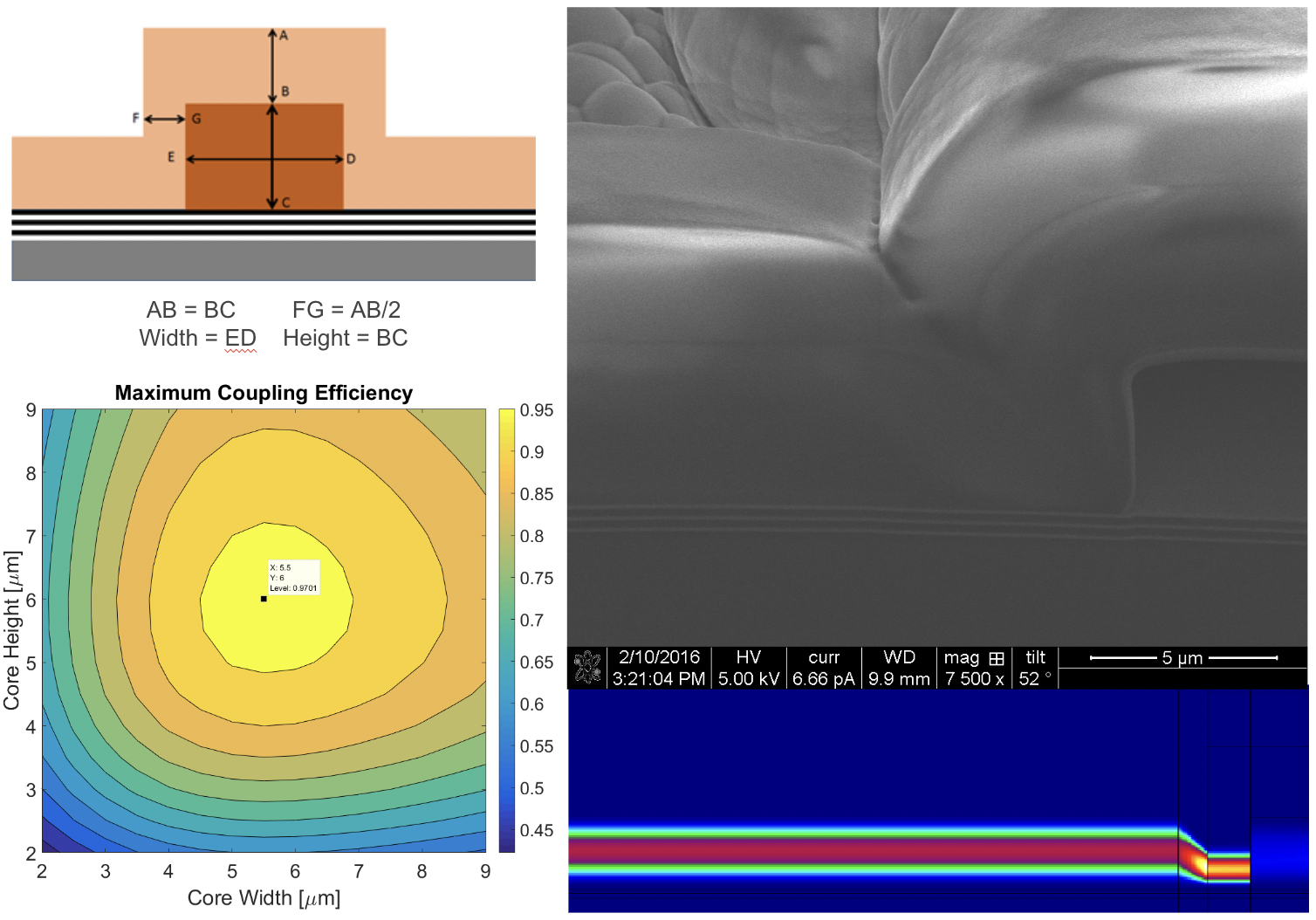

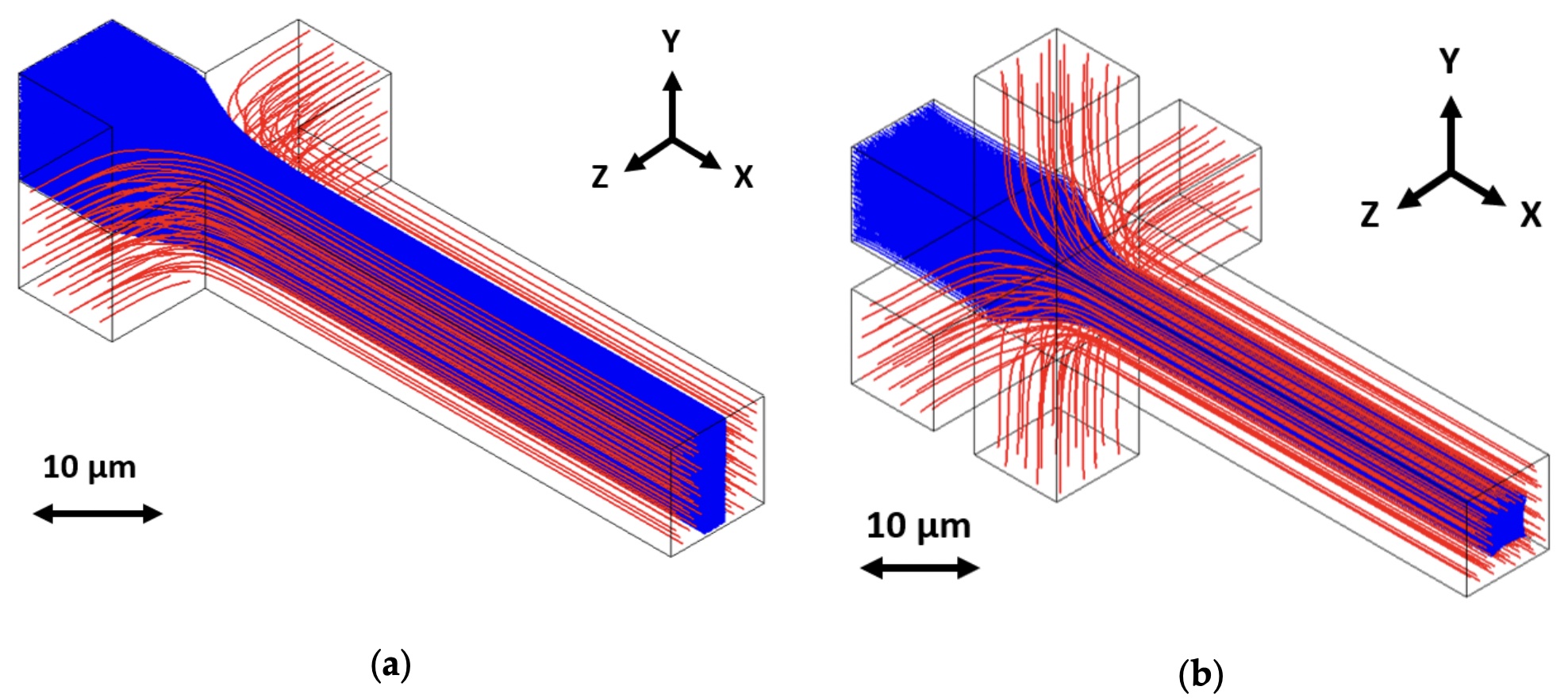

I have taken Photon Design training sessions to learn how to model and simulate different types of waveguides. I use FIMMWAVE for mode calculation and to build the mode list. FIMMPROP is used to calculate mode overlaps and propagation along the waveguide. This way, we calculate coupling efficiency, loss, and mode confinement at the desired location. Fig. 4 shows an example of design optimization. Here, I wrote a Python script to automate multiple variable sweeps and store the S-matrix outputs in a log file. We can then pick the sweet spot with tolerance considerations. The model contains a tapered/crevice section, which was discovered in the actual fabricated devices by focused ion beam (FIB) milling.
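The sweep-and-select workflow can be sketched independently of the simulator. In the sketch below, `simulate_coupling` is a hypothetical placeholder (a smooth toy function) standing in for a FIMMPROP run that returns a coupling efficiency derived from the S-matrix; it does not use Photon Design's actual Python interface, and the parameter names and ranges are illustrative. Every grid point is logged to a CSV file, and the "sweet spot" is chosen as the point whose worst-case value over neighboring grid points (a simple proxy for fabrication tolerance) is highest, instead of the raw maximum.

```python
import csv
import itertools
import tempfile
from pathlib import Path

import numpy as np


def simulate_coupling(taper_len_um, core_width_um):
    """Hypothetical stand-in for a FIMMPROP run; returns a toy |S21|^2 in [0, 1]."""
    sharp = np.exp(-((taper_len_um - 40.0) / 3.0) ** 2 - ((core_width_um - 3.0) / 0.3) ** 2)
    broad = 0.9 * np.exp(-((taper_len_um - 70.0) / 15.0) ** 2 - ((core_width_um - 4.0) / 1.0) ** 2)
    return float(max(sharp, broad))


def sweep(lengths, widths, log_path):
    """Run every (length, width) combination, log each result, and return the grid."""
    grid = np.empty((len(lengths), len(widths)))
    with open(log_path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["taper_len_um", "core_width_um", "coupling"])
        for (i, length), (j, width) in itertools.product(enumerate(lengths), enumerate(widths)):
            grid[i, j] = simulate_coupling(length, width)
            writer.writerow([length, width, grid[i, j]])
    return grid


def robust_optimum(grid, radius=1):
    """Index of the point with the best worst-case value within +/- radius grid steps."""
    worst = np.empty_like(grid)
    n, m = grid.shape
    for i in range(n):
        for j in range(m):
            window = grid[max(0, i - radius):i + radius + 1, max(0, j - radius):j + radius + 1]
            worst[i, j] = window.min()
    return np.unravel_index(np.argmax(worst), grid.shape), worst


lengths = np.linspace(20.0, 100.0, 41)  # 2 um steps
widths = np.linspace(2.0, 6.0, 21)      # 0.2 um steps
log_file = Path(tempfile.gettempdir()) / "sweep_log.csv"
grid = sweep(lengths, widths, log_file)
i_raw, j_raw = np.unravel_index(np.argmax(grid), grid.shape)
(i_rob, j_rob), worst = robust_optimum(grid, radius=1)
print("raw peak:    L=%.1f um, w=%.1f um" % (lengths[i_raw], widths[j_raw]))
print("robust pick: L=%.1f um, w=%.1f um" % (lengths[i_rob], widths[j_rob]))
```

With this toy response, the raw maximum sits on a narrow peak, while the tolerance-aware pick moves to the broader, slightly lower plateau that is less sensitive to small fabrication deviations.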

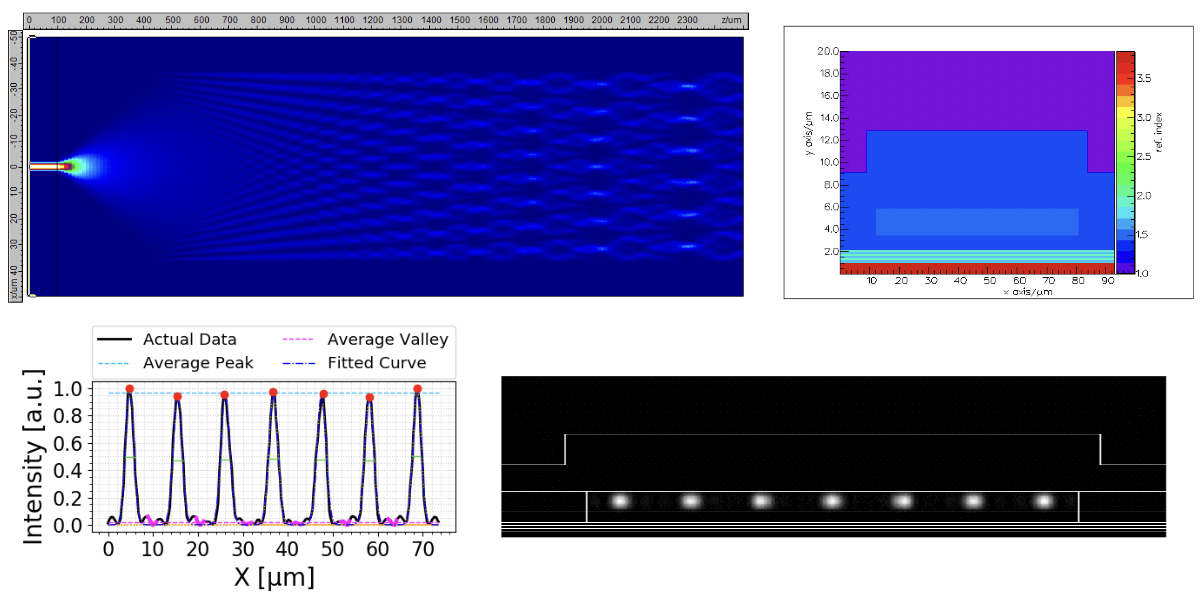

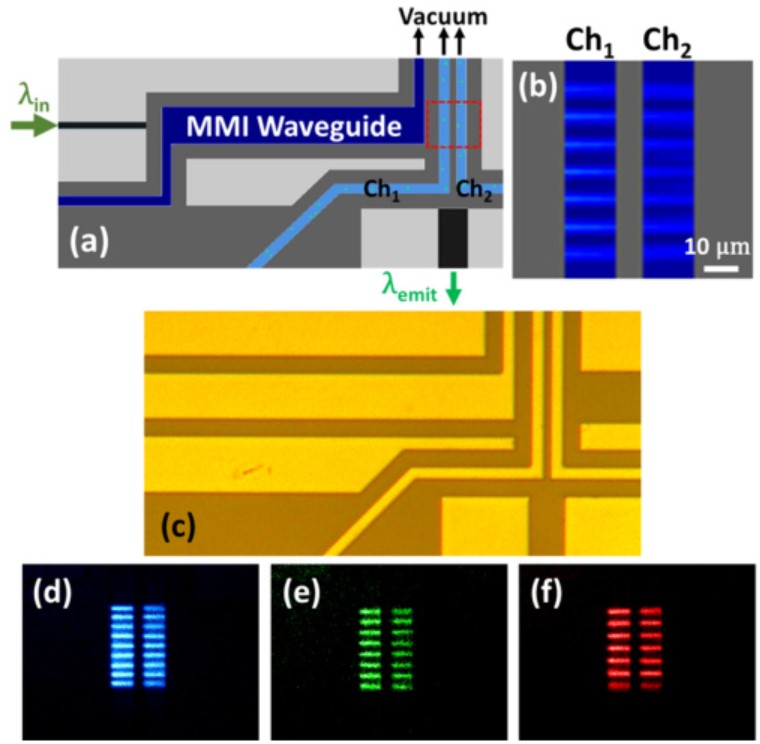

Fig. 5 shows another example, in which I simulated a multi-mode interference (MMI) waveguide designed to produce a high-contrast multi-spot pattern for multiplexed biosensing applications. The interference pattern is calculated using the FIMMPROP engine.
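Before running a full FIMMPROP simulation, a first-order design estimate can come from the standard paraxial self-imaging relations for a multimode waveguide. The beat length of the two lowest-order modes is L_π ≈ 4·n_r·W²/(3λ), and for a centered (symmetric) input the first N-fold image appears at L ≈ 3·L_π/(4N) = n_r·W²/(N·λ). The sketch below assumes the effective width equals the physical width (ignoring mode penetration into the cladding), and the index and width in the example are illustrative values, not those of the fabricated device.

```python
def beat_length(n_r, width_um, wavelength_um):
    """Beat length L_pi = 4 n_r W^2 / (3 lambda) of the two lowest-order modes, in um."""
    return 4.0 * n_r * width_um ** 2 / (3.0 * wavelength_um)


def nfold_image_length(n_r, width_um, wavelength_um, n_spots):
    """Shortest length (um) giving n_spots images for a centered input: 3 L_pi / (4 N)."""
    return 3.0 * beat_length(n_r, width_um, wavelength_um) / (4.0 * n_spots)


def spots_at(n_r, width_um, wavelength_um, length_um):
    """Spot number N = n_r W^2 / (lambda L); non-integer values fall between clean images."""
    return n_r * width_um ** 2 / (wavelength_um * length_um)


if __name__ == "__main__":
    n_r, width = 1.46, 75.0  # illustrative core index and effective width (um)
    for wl in (0.488, 0.633):
        length = nfold_image_length(n_r, width, wl, 7)
        print(f"lambda={wl} um: 7 spots at L={length:.0f} um")
```

Since N scales as 1/λ at a fixed length, the same MMI produces a different spot count at each wavelength, which is the property that makes multi-spot patterns useful for spectrally multiplexed detection.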

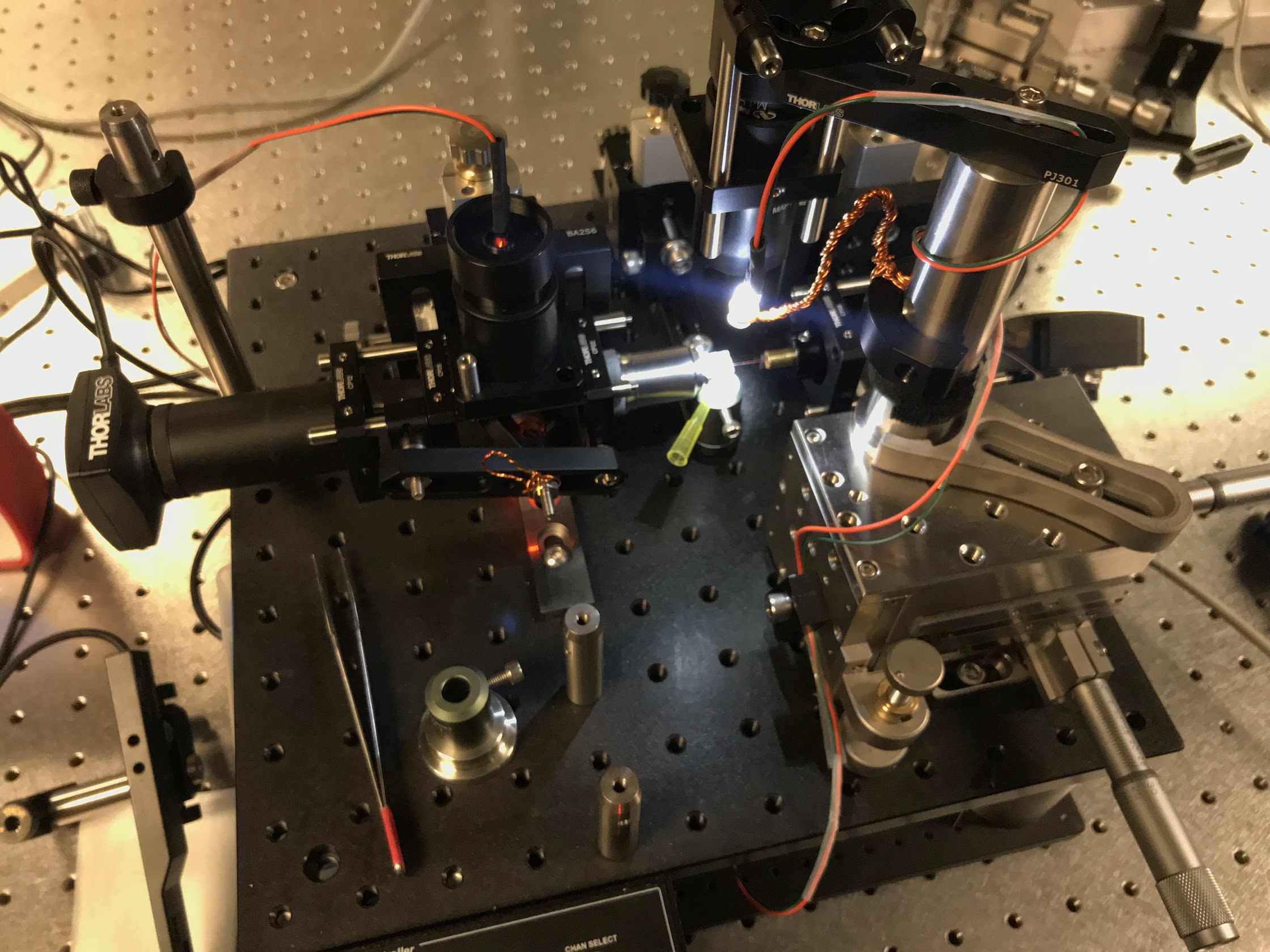

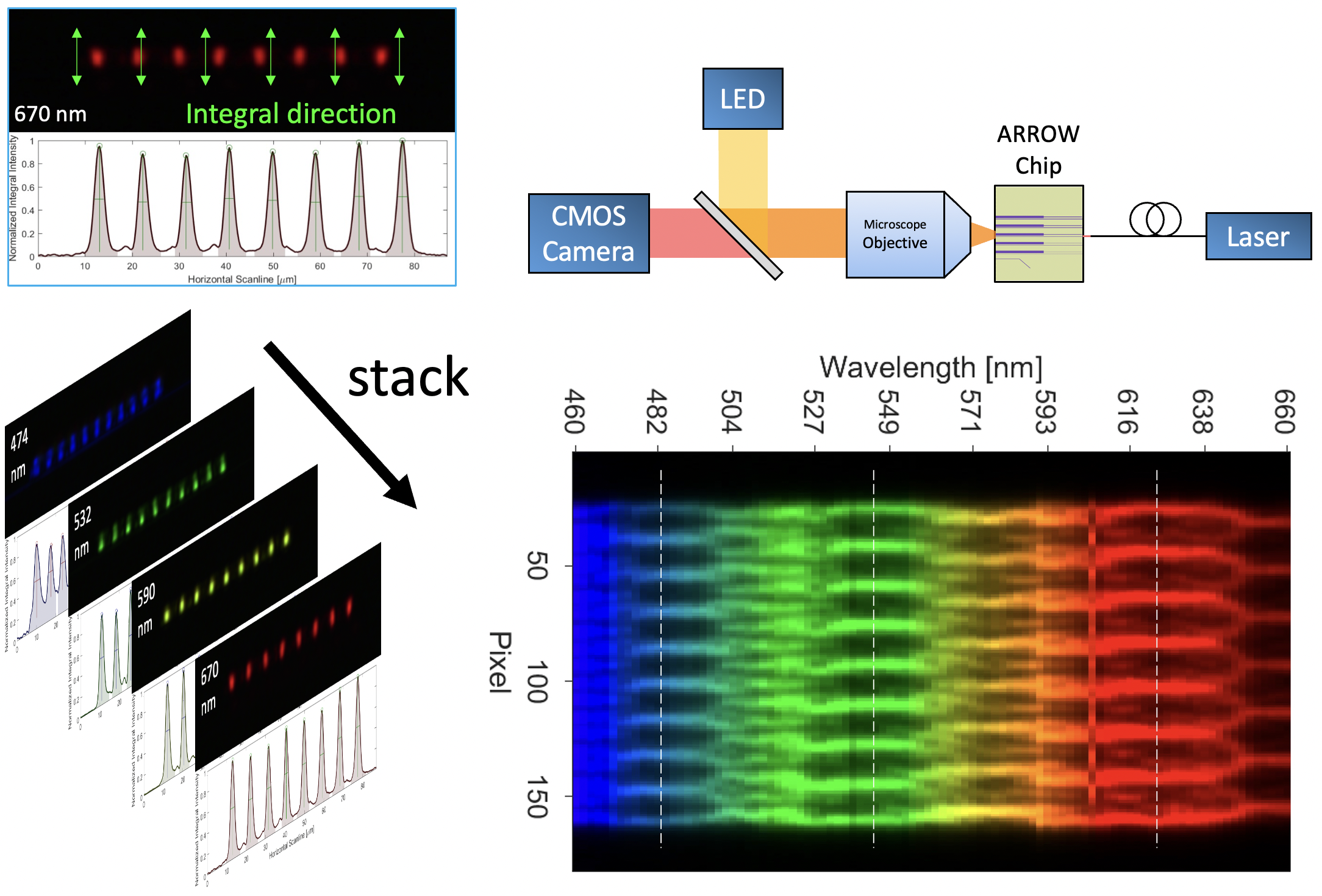

In this project, I characterized various single-layer SiO2 low-index waveguides fabricated on an anti-resonant reflecting optical waveguide (ARROW) layer to measure the quality of the multi-spot interference pattern. To help with batch characterization, I built a mode-imaging setup with C# GUI software, in which live top-down and side-facet images are displayed using two CMOS cameras. The software can also communicate with the NKT SuperK EXTREME supercontinuum white-light laser and the SuperK SELECT to tune and scan wavelengths.

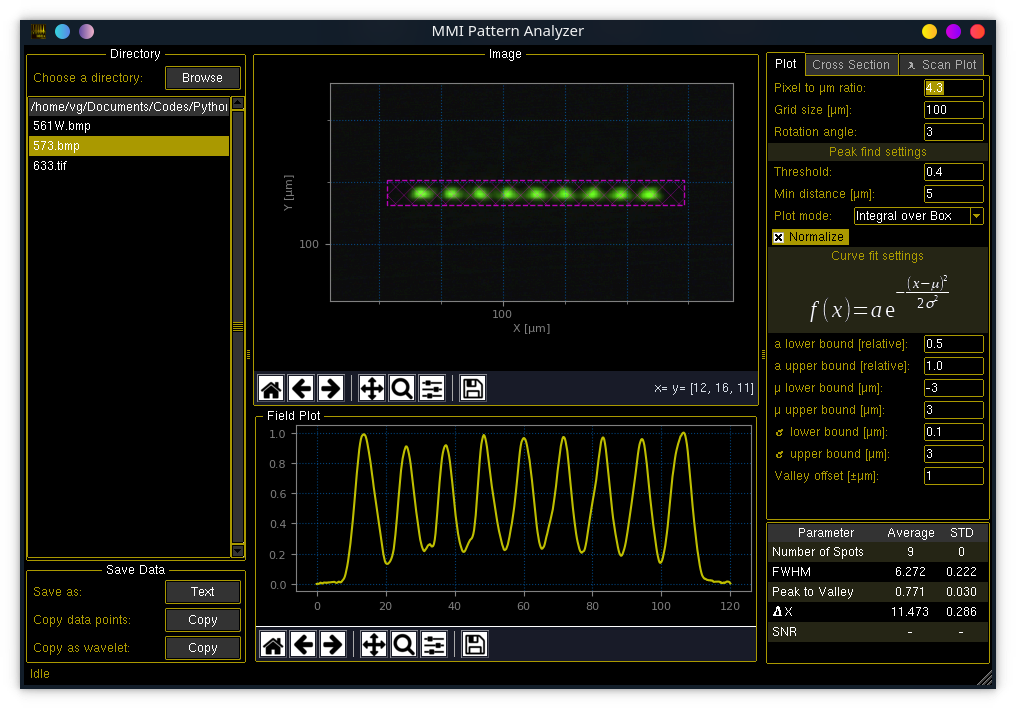

To analyze the recorded mode images, I developed an image processing program (Mode-Analyzer) that converts multi-spot facet images into a 1D array and then extracts multiple features, such as full width at half maximum (FWHM) and peak-to-valley (P2V). The program is written in Python, and the GUI is developed in Tkinter.
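The core of this kind of analysis can be sketched in a few lines of NumPy. The facet image is collapsed into a 1D profile by summing along the axis perpendicular to the row of spots, the spot peaks are located, and each spot's FWHM is measured by linear interpolation at half maximum. Here P2V is taken as the mean peak height divided by the mean valley (minimum between adjacent peaks); that definition, the simple neighbor-comparison peak finder, the function names, and the assumption of a background-subtracted image are all illustrative, not Mode-Analyzer's actual implementation.

```python
import numpy as np


def profile_1d(image, axis=0):
    """Collapse a 2D facet image into a 1D intensity profile by summing along `axis`."""
    return np.asarray(image, dtype=float).sum(axis=axis)


def find_peaks_simple(y, min_height):
    """Indices of local maxima above `min_height` (no smoothing, for illustration)."""
    mid = y[1:-1]
    mask = (mid > y[:-2]) & (mid >= y[2:]) & (mid > min_height)
    return np.nonzero(mask)[0] + 1


def fwhm(y, peak):
    """FWHM around index `peak`; assumes the profile drops below half maximum on both sides."""
    half = y[peak] / 2.0
    left = peak
    while left > 0 and y[left] > half:
        left -= 1
    right = peak
    while right < len(y) - 1 and y[right] > half:
        right += 1
    # Linearly interpolate the exact half-maximum crossing on each side.
    x_left = left + (half - y[left]) / (y[left + 1] - y[left])
    x_right = right - (half - y[right]) / (y[right - 1] - y[right])
    return x_right - x_left


def peak_to_valley(y, peaks):
    """Mean peak height divided by the mean minimum between adjacent peaks."""
    valleys = [y[a:b + 1].min() for a, b in zip(peaks[:-1], peaks[1:])]
    return float(np.mean(y[peaks]) / np.mean(valleys))


if __name__ == "__main__":
    # Synthetic facet image: three Gaussian spots (sigma = 4 px) in a row.
    rows, cols = np.mgrid[0:40, 0:100]
    image = sum(np.exp(-((cols - c) ** 2) / (2 * 4.0 ** 2) - ((rows - 20) ** 2) / (2 * 3.0 ** 2))
                for c in (30, 50, 70))
    y = profile_1d(image, axis=0)
    peaks = find_peaks_simple(y, 0.5 * y.max())
    print("peaks:", peaks.tolist())
    print("FWHM (px):", [round(fwhm(y, p), 2) for p in peaks])
    print("P2V:", round(peak_to_valley(y, peaks), 2))
```

On real camera data, some smoothing and background subtraction before peak finding would usually be needed.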

Polydimethylsiloxane (PDMS) is a biocompatible, flexible, transparent elastomer that has been used in microfluidics and biosensors for decades. Its desirable properties for microfluidic applications, combined with control over its optical properties during fabrication, make PDMS a favorable material for optofluidics. I have gained skills and experience fabricating optofluidic devices using the in-house cleanroom facility at BSOE. I use AutoCAD for photomask design, and to ease polyline verification as a design rule check (DRC) process for commercial mask-writer instruments, I developed a Lisp script called Poly Hatch.
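Poly Hatch itself is a Lisp script for AutoCAD and is not reproduced here. As an illustration of the kind of check that polyline verification involves, the Python sketch below flags polylines that are not closed or whose non-adjacent edges cross, two conditions that can cause trouble when a layout is prepared for a mask writer. The function names and the choice of checks are assumptions for illustration, not a description of what Poly Hatch does.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def is_closed(pts: List[Point], tol: float = 1e-9) -> bool:
    """A polyline is closed if it has at least 3 edges and its last vertex equals its first."""
    if len(pts) < 4:
        return False
    (x0, y0), (x1, y1) = pts[0], pts[-1]
    return abs(x0 - x1) <= tol and abs(y0 - y1) <= tol


def _orient(a: Point, b: Point, c: Point) -> float:
    """Twice the signed area of triangle abc (> 0 means counter-clockwise)."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def _segments_cross(p1: Point, p2: Point, q1: Point, q2: Point) -> bool:
    """True if segments p1p2 and q1q2 properly cross (touching or collinear overlap excluded)."""
    d1, d2 = _orient(q1, q2, p1), _orient(q1, q2, p2)
    d3, d4 = _orient(p1, p2, q1), _orient(p1, p2, q2)
    return d1 * d2 < 0 and d3 * d4 < 0


def self_intersects(pts: List[Point]) -> bool:
    """Check every pair of non-adjacent edges of a closed polyline for a proper crossing."""
    edges = list(zip(pts[:-1], pts[1:]))
    n = len(edges)
    for i in range(n):
        for j in range(i + 2, n):
            if i == 0 and j == n - 1:  # first and last edges share the closing vertex
                continue
            if _segments_cross(*edges[i], *edges[j]):
                return True
    return False


def check_polyline(pts: List[Point]) -> List[str]:
    """Return a list of detected problems (empty if the polyline passes)."""
    if not is_closed(pts):
        return ["open polyline"]
    return ["self-intersection"] if self_intersects(pts) else []


if __name__ == "__main__":
    square = [(0, 0), (10, 0), (10, 10), (0, 10), (0, 0)]
    bowtie = [(0, 0), (10, 10), (10, 0), (0, 10), (0, 0)]
    for name, poly in (("square", square), ("bowtie", bowtie)):
        print(name, check_polyline(poly) or "OK")
```

The pairwise edge test is O(n²), which is fine for typical mask features; very large polygons would call for a sweep-line algorithm.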

Hydrodynamic focusing (HDF) in fluidics helps confine the sample stream by introducing sheath streams surrounding it. With the help of sacrificial layers, 3D-HDF integration was achieved within the ARROW optofluidic chip.
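A back-of-the-envelope estimate of how strongly the sheath flow squeezes the sample stream follows from flow conservation. If the velocity is assumed uniform across the channel (plug flow), the sample occupies the same fraction of the cross-section as its share of the total flow rate. For 2D focusing, its width is then roughly w·Q_s/(Q_s + Q_sh). For idealized 3D focusing that shrinks the stream equally in both transverse directions, each dimension scales with the square root of that fraction. This ignores the parabolic velocity profile, so it is only a rough first guess. The function names and example dimensions are hypothetical.

```python
import math


def focused_width_2d(channel_width_um, q_sample, q_sheath_total):
    """Sample-stream width under plug-flow 2D focusing: w * Qs / (Qs + Qsh)."""
    fraction = q_sample / (q_sample + q_sheath_total)
    return channel_width_um * fraction


def focused_size_3d(channel_width_um, channel_height_um, q_sample, q_sheath_total):
    """Idealized 3D focusing: area fraction = flow fraction, shrunk equally in both axes."""
    fraction = q_sample / (q_sample + q_sheath_total)
    scale = math.sqrt(fraction)
    return channel_width_um * scale, channel_height_um * scale


if __name__ == "__main__":
    # Illustrative channel cross-section (um) and flow rates (any consistent unit).
    print(focused_width_2d(12.0, 1.0, 9.0))
    print(focused_size_3d(12.0, 5.0, 1.0, 9.0))
```

The square-root dependence shows why 3D focusing needs a much larger sheath-to-sample flow ratio than 2D focusing to reach the same linear confinement.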

This simulation was done for EE-216 (Nanomaterials and Nanometer-scale Device, with Prof. Nobuhiko P. Kobayashi), a class in which we had to simulate the deposition of atoms on a predefined surface. We (Yucheng Li, Md Nafiz Amin, and I) used the Lennard-Jones (L-J) potential model to calculate the attractive and repulsive forces each atom feels when introduced into the sample. The visualization and the tensor calculations are done in PyOpenGL and NumPy, respectively. Further information is available in my GitHub repository.
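The force calculation at the heart of such a simulation vectorizes naturally in NumPy. For the Lennard-Jones potential V(r) = 4ε[(σ/r)¹² − (σ/r)⁶], the force on atom i from atom j is F = (24ε/r²)[2(σ/r)¹² − (σ/r)⁶](x_i − x_j). The sketch below computes all pairwise forces at once, defaulting to reduced units (ε = σ = 1). It is an illustration rather than the code in the repository, and it omits the cutoff radius and neighbor lists a larger simulation would need.

```python
import numpy as np


def lj_forces(positions, epsilon=1.0, sigma=1.0):
    """Pairwise Lennard-Jones forces for an (N, 3) array of positions; returns (N, 3)."""
    diff = positions[:, None, :] - positions[None, :, :]  # x_i - x_j, shape (N, N, 3)
    r2 = np.sum(diff ** 2, axis=-1)
    np.fill_diagonal(r2, np.inf)  # exclude self-interaction (terms go to 0)
    s6 = (sigma ** 2 / r2) ** 3
    s12 = s6 ** 2
    coeff = 24.0 * epsilon * (2.0 * s12 - s6) / r2  # positive = repulsive
    return np.sum(coeff[:, :, None] * diff, axis=1)


def lj_energy(positions, epsilon=1.0, sigma=1.0):
    """Total Lennard-Jones energy, counting each pair once."""
    i, j = np.triu_indices(len(positions), k=1)
    r2 = np.sum((positions[i] - positions[j]) ** 2, axis=-1)
    s6 = (sigma ** 2 / r2) ** 3
    return float(np.sum(4.0 * epsilon * (s6 ** 2 - s6)))


if __name__ == "__main__":
    # Two atoms closer than the equilibrium distance 2**(1/6) * sigma repel each other.
    pos = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
    print(lj_forces(pos))
    print(lj_energy(pos))
```

In a deposition run, these forces would feed a time integrator (for example, velocity Verlet) that advances each incoming atom until it settles on the surface.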